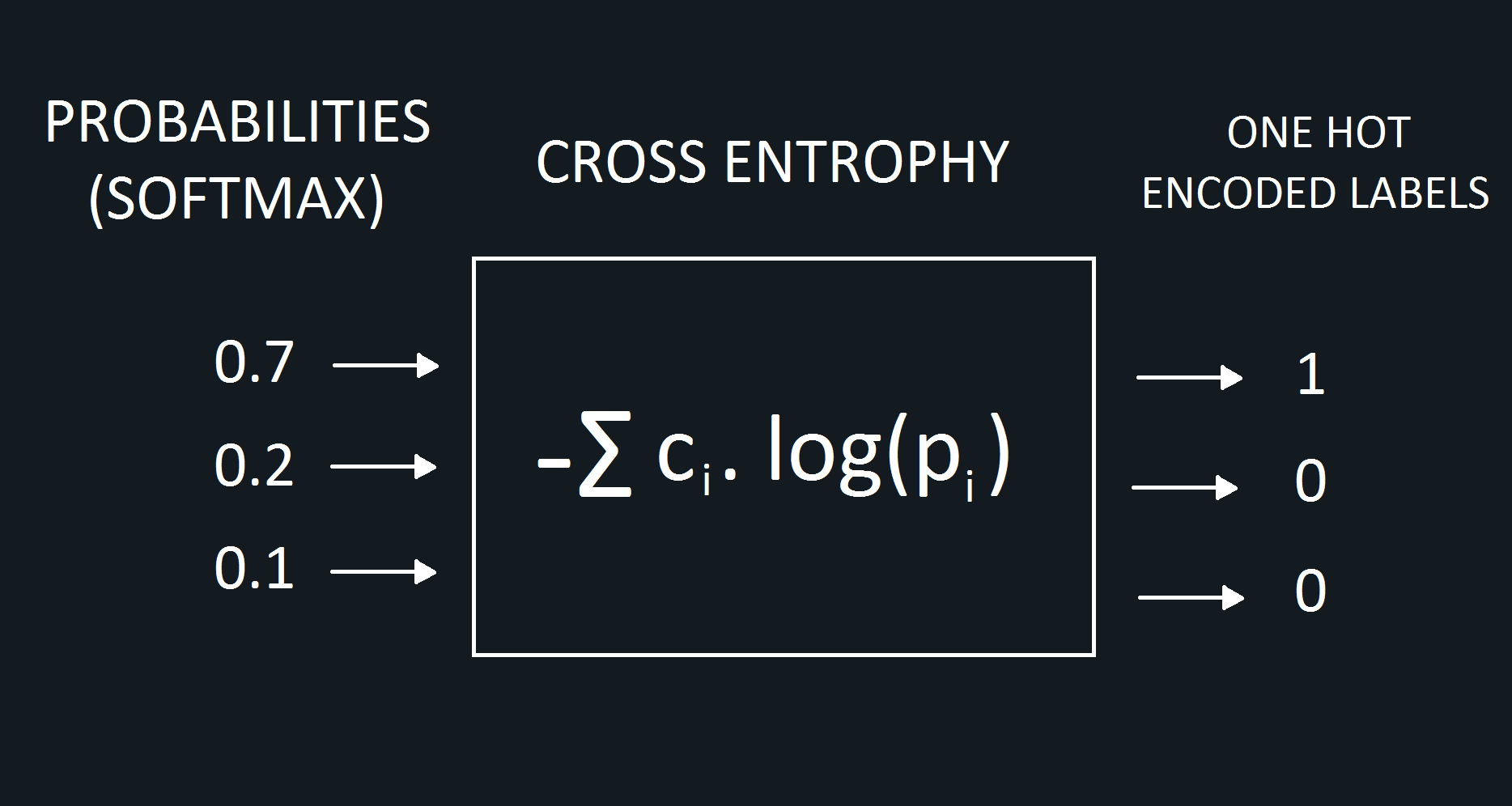

If you've been involved with neural networks and have been using them for classification, you almost certainly will have used a cross-entropy loss function. However, have you really understood what cross-entropy means? Do you know what entropy means in the context of machine learning? If not, then this post is for you. In this introduction, I'll carefully unpack the concepts and mathematics behind entropy, cross-entropy, and a related concept, KL divergence, to give you a better foundational understanding of these important ideas.

For starters, let's look at the concept of entropy. The term originated in statistical thermodynamics, which is a sub-domain of physics. For machine learning, however, we are more interested in entropy as defined in information theory, also called Shannon entropy. This formulation of entropy is closely tied to the allied idea of information: entropy is the average rate of information produced by a stochastic process. As such, we first need to unpack what the term "information" means in an information theory context. If you're feeling a bit lost at this stage, don't worry, things will become much clearer soon.

Information $I$ in information theory is generally measured in bits, and can loosely, yet instructively, be defined as the amount of "surprise" arising from a given event. To take a simple example, imagine we have an extremely unfair coin which, when flipped, has a 99% chance of landing heads and only a 1% chance of landing tails. We can represent this using set notation as $\{0.99, 0.01\}$. An event with probability $p$ carries $-\log_2 p$ bits of information, so heads is barely surprising while tails is very surprising. Cross-entropy between a true distribution $P$ and an estimated distribution $Q$ is $H(P, Q) = -\sum_x P(x) \log Q(x)$, where $-\log Q(x)$ is the information content of $Q$, but instead weighted by the distribution $P$.

Machine learning and deep learning are becoming an increasingly important part of our lives. Whether it's for business strategy or technological advancement, these techniques help us improve our decision making and future planning. But for that to happen, our models first have to have a high degree of accuracy. That is why loss functions are perhaps the most important part of training your model: they quantify how far its predictions are from the targets. It's not, however, a one-size-fits-all situation. There are different types of loss function, and not all of them will be compatible with your model. Picking the right one is key to training your model correctly. In this article, we'll look at one of those types, namely the cross-entropy loss function. We'll explain what it is and provide a practical example so you can gain a better understanding of the fundamentals.

What Is the Cross-Entropy Loss Function?

Cross-entropy loss measures the difference between two probability distributions: the probabilities your model estimates and the outcome you want. We use this type of loss function to judge how accurate a machine learning or deep learning model is. Essentially, it reduces the gap between the estimated probabilities and the desired outcome to a single real number, the "loss". The larger the difference between the two, the higher the loss.

We use cross-entropy loss in classification tasks; in fact, it's the most popular loss function in such cases. While the outputs in regression tasks are numbers, the outputs in classification are categories, like cats and dogs.

Cross-Entropy Loss: A Practical Example

Suppose we have a set of photos, each showing a cat, a dog, or a horse. They are all labeled images, with the label acting as the target. But how does it look in numerical terms? Take a photo of a dog. The target vector $t$ for this photo would be 0, 1, 0. The 0s mean it is not a cat or a horse, while the 1 shows it is, indeed, a dog. If we were to examine a picture of a horse, the target vector would be 0, 0, 1.

Imagine the outputs of our model for these two images are 0.4, 0.4, 0.2 for the first image and 0.1, 0.2, 0.7 for the second. After some machine learning transformations, these vectors show the probabilities of each photo being a cat, a dog, or a horse. The first vector shows that, according to our algorithm, there is a 0.4, or 40%, chance that the first photo is a cat. Following up on that, there is a 40% chance the photo is of a dog and a 20% chance it is of a horse.

What about the cross-entropy of each photo? Using the natural logarithm, only the term for the true class survives, so the loss for the first image would be $L_1 = -\ln(0.4) \approx 0.92$. Meanwhile, the cross-entropy loss for the second image is $L_2 = -\ln(0.7) \approx 0.36$.

As we already know, the lower the loss, the closer the prediction is to the target. So, what's the meaning of these two cross-entropies? They show the second loss is lower; therefore, the prediction for the second image is superior.
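To make the practical example concrete, here is a minimal Python sketch that computes the cross-entropy for the two photos. It assumes the natural logarithm and one-hot targets (dog for the first photo, horse for the second); the function name `cross_entropy` is a hypothetical helper chosen for illustration, not part of any library.

```python
import math

def cross_entropy(target, predicted):
    """Cross-entropy between a one-hot target and predicted probabilities.

    Assumes the natural logarithm; another base only rescales the result.
    """
    # Terms with a zero target contribute nothing, so skip them
    # (this also avoids evaluating log(0) for classes that are not the target).
    return -sum(t * math.log(p) for t, p in zip(target, predicted) if t > 0)

# Order of classes: cat, dog, horse
loss_first = cross_entropy([0, 1, 0], [0.4, 0.4, 0.2])   # first photo: a dog
loss_second = cross_entropy([0, 0, 1], [0.1, 0.2, 0.7])  # second photo: a horse

print(f"first image:  {loss_first:.3f}")
print(f"second image: {loss_second:.3f}")
```

The second loss comes out lower because the model puts 0.7 on the correct class there, against only 0.4 on the correct class for the first photo.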

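The "surprise" view of information can also be checked numerically for the unfair coin. The sketch below assumes the standard definition of information as the negative base-2 logarithm of an event's probability, and of Shannon entropy as its probability-weighted average; the helper names `information_bits` and `entropy_bits` are hypothetical.

```python
import math

def information_bits(p):
    """Information ("surprise") in bits of an event with probability p."""
    return -math.log2(p)

def entropy_bits(probs):
    """Shannon entropy in bits: the average information over all outcomes."""
    return sum(p * information_bits(p) for p in probs if p > 0)

# The extremely unfair coin: 99% heads, 1% tails
coin = {"heads": 0.99, "tails": 0.01}

for outcome, p in coin.items():
    print(f"{outcome}: {information_bits(p):.4f} bits")

print(f"entropy of the unfair coin: {entropy_bits(coin.values()):.4f} bits")
print(f"entropy of a fair coin:     {entropy_bits([0.5, 0.5]):.4f} bits")
```

Heads carries almost no information because it is nearly certain, while the rare tails carries several bits. Averaged over many flips, the unfair coin produces far less information per flip than a fair one.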